Stop Asking What Will Happen

The Seven Questions Leaders Should Ask When the Future Is Unknowable

By Dr. Barbara L. Van Veen - FuturistBarbara.com

February 23, 2026

The Forecast Trap

Ask a hundred executives to predict next quarter's revenue, and most will give you a number. Ask them how confident they are, and they'll tell you they're 80% sure the actual result will fall within a certain range. Now here's the problem: when researchers tracked over 6,500 range forecasts made by chief financial officers (in that study, forecasts of stock market returns rather than revenue), the actual outcomes fell outside those "80% confident" ranges roughly 60% of the time.

This isn't a story about bad data or poor analysis. It's a story about a fundamental misdiagnosis that shapes—and often undermines—strategic decision-making in organizations worldwide. Leaders treat uncertainty as a forecasting problem when it's actually a design problem. They reach for precision when they should be building resilience. And in doing so, they commit their organizations to paths that become increasingly difficult to escape.

The evidence of this pattern extends far beyond finance. Marketing managers consistently express excessive confidence in their predictions of customer behavior. Operations teams systematically underestimate project timelines and costs. Infrastructure projects routinely exceed their budgets because planners anchor on initial estimates and then escalate commitment as reality diverges from the forecast. The pattern is so consistent that it reveals something deeper than individual error—it reveals a systematic organizational failure to match decision-making tools to the nature of uncertainty itself.

The Idea in Brief

Most strategic mistakes do not come from poor analysis. They come from asking the wrong question about the future.

When uncertainty increases, leaders instinctively ask for better forecasts: more data, better models, sharper predictions. But many of the most consequential decisions—technological transitions, regulatory shifts, geopolitical changes—cannot be resolved by forecasting alone. The issue is not prediction. It is diagnosis.

Before deciding what to do, leaders need to understand the nature of the uncertainty they face. Is the future actually predictable? What assumptions are we relying on? Which signals suggest the system may be shifting?

The Seven Diagnostic Questions were developed for exactly this moment: when waiting for certainty is impossible, but acting blindly is dangerous.

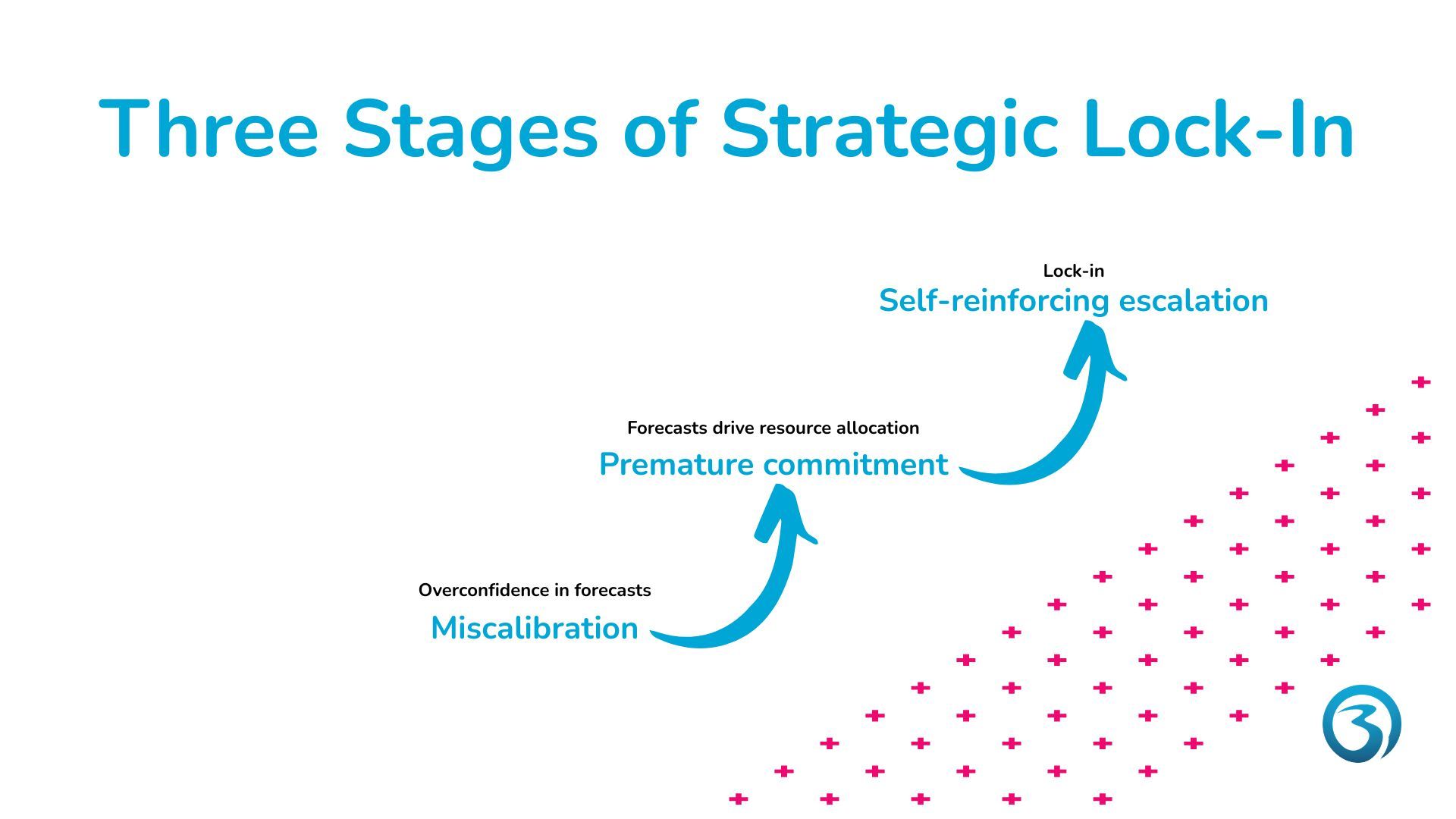

The Three Stages of Strategic Lock-In

Understanding how organizations become trapped by their own forecasts requires seeing the process unfold in three distinct but reinforcing stages.

Stage one is miscalibration. Leaders don't just make predictions; they attach false precision to them. When executives say they're "80% confident," their stated ranges typically capture the actual outcome far less often than that figure implies. This isn't because they lack information. It's because they systematically overestimate their ability to predict outcomes. Research across multiple domains shows that managers produce confidence intervals that are far too narrow, treating inherently uncertain futures as if they were merely risky.
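Miscalibration of this kind can be measured directly: record each stated range next to the outcome that actually occurred, then count how often the outcome landed inside. The Python sketch below assumes forecasts are stated as 80% ranges. The names `IntervalForecast`, `hit_rate`, and `calibration_gap` are hypothetical, and the five data points are invented for illustration, not drawn from any study.

```python
from dataclasses import dataclass


@dataclass
class IntervalForecast:
    """One forecast stated as a range the forecaster claims to be 80% sure of."""
    low: float
    high: float
    actual: float  # the outcome observed once the period closed

    def hit(self) -> bool:
        # A "hit" means the realized outcome landed inside the stated range.
        return self.low <= self.actual <= self.high


def hit_rate(forecasts: list[IntervalForecast]) -> float:
    """Share of outcomes that fell inside their stated ranges."""
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum(f.hit() for f in forecasts) / len(forecasts)


def calibration_gap(forecasts: list[IntervalForecast],
                    stated_confidence: float = 0.80) -> float:
    """Stated confidence minus observed hit rate; positive means ranges were too narrow."""
    return stated_confidence - hit_rate(forecasts)


# Invented history of five "80% confident" forecasts (illustrative only).
history = [
    IntervalForecast(100, 110, 104),  # inside the range
    IntervalForecast(100, 110, 118),  # above the range
    IntervalForecast(95, 105, 90),    # below the range
    IntervalForecast(120, 130, 125),  # inside the range
    IntervalForecast(80, 90, 97),     # above the range
]

print(f"{hit_rate(history):.0%} of outcomes fell inside the stated 80% ranges")
# prints "40% of outcomes fell inside the stated 80% ranges"
```

Over many forecasts, a well-calibrated forecaster's 80% ranges should contain roughly 80% of outcomes; a persistent positive gap is a signal to widen the ranges. Five forecasts are far too few to judge anyone's calibration. The point here is the bookkeeping, not the verdict.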

The roots of this miscalibration lie in how people process success and failure. When forecasts prove accurate, leaders credit their own skill. When forecasts miss, they blame external factors—bad luck, market volatility, unforeseen events. This attribution pattern creates a feedback loop: each perceived success increases confidence in future predictions, making forecasts progressively more optimistic and more precise, even as the underlying uncertainty remains unchanged.

Stage two is premature commitment. Overconfident forecasts don't just sit in spreadsheets—they drive resource allocation. Organizations commit capital, hire staff, enter markets, and build infrastructure based on predictions that carry an illusion of certainty. These commitments become especially problematic because they're often irreversible. Once you've built the factory, hired the team, or announced the strategy publicly, the costs of changing course multiply rapidly.

Organizations amplify individual overconfidence through structural factors. Functional departments have incentives to present optimistic forecasts to secure resources. Budget processes create mental accounts that anchor subsequent decisions. Public forecasts generate reputational stakes that make leaders reluctant to revise their views. A supply chain planning study documented how these functional biases systematically distorted organizational forecasts, even after the company implemented coordination processes designed to reduce them. The biases didn't disappear—they just took new forms.

Stage three is self-reinforcing escalation. Once committed, organizations find it remarkably difficult to change course. Sunk costs loom large in decision-making, even though economic logic says they shouldn't matter. Budget frames and public statements create psychological accounts that make continuation feel necessary. Leaders who "stay the course" are often perceived as more trustworthy than those who adapt, creating reputational incentives for escalation even when evidence suggests the initial forecast was wrong.

This escalation dynamic explains why infrastructure projects systematically overrun their budgets, why failing ventures receive continued funding, and why strategic initiatives persist long after their underlying assumptions have been violated. The forecast becomes a commitment device, and the commitment becomes a trap.

When the Tool Doesn't Match the Task

The forecast-commitment-escalation cycle is damaging in any environment, but it becomes especially dangerous when leaders apply forecasting tools to situations where forecasting fundamentally cannot work.

More than a century ago, economists drew a crucial distinction between two types of uncertainty. The first type, risk, involves unknown outcomes but known probabilities. You don't know whether the coin will land heads or tails, but you know it's 50-50. The second type, fundamental uncertainty, involves situations where even the probabilities are unknowable because future states depend on contingencies that don't yet exist or on causal structures that are changing.

Consider the difference between predicting tomorrow's weather and predicting the social impact of artificial intelligence over the next decade. Weather forecasting works because atmospheric physics is well-understood and historical patterns provide reliable training data. AI's societal impact is fundamentally uncertain because it depends on technological breakthroughs that haven't occurred, regulatory decisions that haven't been made, and social adaptations that haven't emerged. No amount of data or analysis can convert the second problem into the first.

Yet managers routinely apply forecasting tools to deeply uncertain environments. They build five-year plans for emerging markets where regulatory environments are in flux. They forecast revenue from products that depend on technologies still in development. They project market share in industries being disrupted by competitors they can't yet identify. And crucially, they express precision about these forecasts—narrow confidence intervals, specific targets, detailed timelines—that the underlying uncertainty cannot support.

This creates what might be called a "double failure": using the wrong tool (forecasting) with excessive confidence (miscalibration) in contexts where both errors compound. When you forecast under deep uncertainty, you're not just likely to be wrong—you're likely to be wrong in ways you can't anticipate, making your confidence intervals meaningless. When you commit resources based on these forecasts, you're locking your organization into paths optimized for futures that won't occur. And when you escalate commitment to defend those forecasts, you're preventing the very adaptation that deep uncertainty demands.

When organizations attempt to forecast their way through uncertainty, strategies tend to become fragile. What they need instead is a way to structure thinking about the unknown.

A Familiar Pattern: The Automotive Transition

The past fifteen years in the automotive industry provide a useful illustration.

At various moments, analysts were confident about several different futures:

- Diesel would remain dominant in Europe.

- Hybrid vehicles would be the long-term transition.

- Hydrogen fuel cells would arrive soon.

- Battery electric vehicles would stay niche.

Each of these projections was supported by credible analysis at the time. Yet the system evolved differently. Regulatory pressure intensified after the diesel emissions scandal. Battery costs fell faster than expected. China accelerated electric vehicle adoption. Governments announced phase-out dates for combustion engines. Charging infrastructure expanded unevenly but persistently (IEA, 2023; ICCT, 2020).

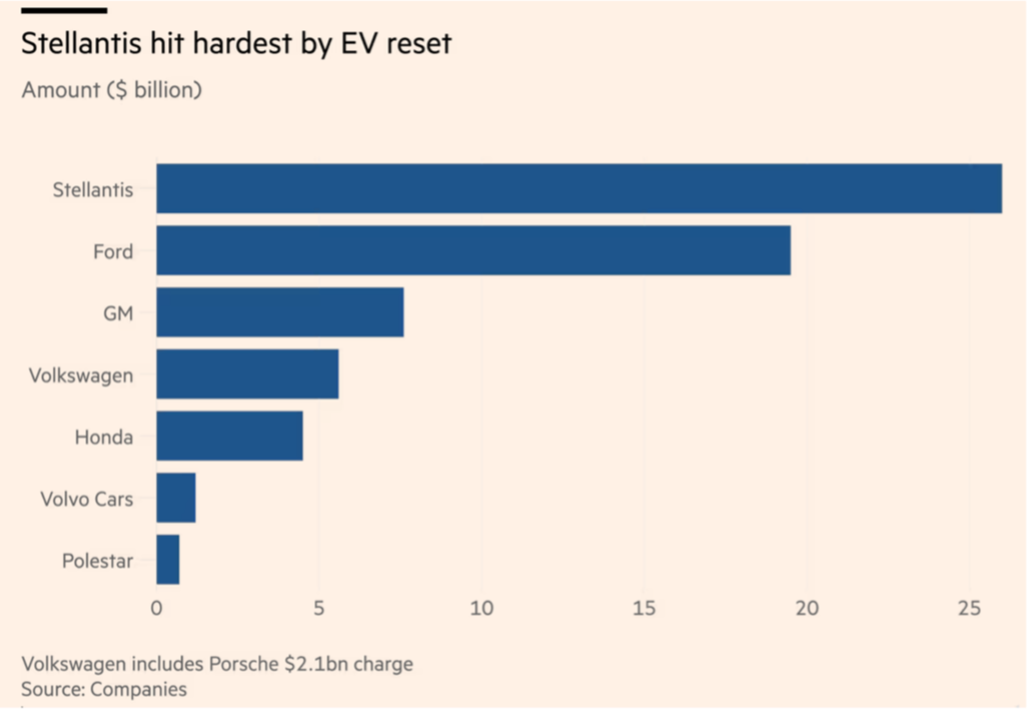

Example of the cost of wrong forecasts: a write-off of at least $65bn for the global car industry in 2025, when electric vehicle ambitions had to be reversed following a pivot in climate policy by the Trump administration.

None of these developments were unimaginable. But their interaction—and especially their timing—proved difficult to forecast.

What the automotive transition shows is not that forecasting is useless. It shows that strategy often unfolds in environments where forecasting alone cannot provide a stable foundation.

Why Smart Organizations Still Get Surprised

The forecast trap has another pernicious effect: it creates organizational blindness to signals that contradict the forecast.

Research examining 139 proof-of-concept projects found that organizations routinely dismissed early warning signals as anomalies or noise. Without deliberate processes to amplify weak signals (escalating them from front-line observers to decision-makers, connecting them across functional silos, and interpreting them in context), these early warnings simply disappeared. Leaders were blindsided by changes they could have seen coming, not because the signals weren't there, but because their commitment to existing forecasts kept them from seeing those signals.

This blindness extends beyond missed opportunities. It also obscures system-level effects and unintended consequences. Forecasting models typically assume linear relationships: pull this lever, get that result. But organizational and market systems are complex, with feedback loops, time delays, and non-linear interactions. Interventions produce second-order effects that weren't in the forecast. Performance metrics get gamed. Incentive systems backfire. Competitors respond in unexpected ways.

The supply chain case mentioned earlier illustrates this perfectly. The company implemented a consensus forecasting process to reduce bias. It worked—partially. But it also created new biases as functions learned to game the consensus process, and it obscured signals that didn't fit the agreed-upon forecast. The system adapted in ways the designers hadn't anticipated, producing a new set of distortions that were harder to see precisely because everyone believed the forecasting problem had been solved.

The automotive sector shows how structural commitment compounds the problem. Investments in diesel technologies created engineering capabilities, supplier networks, and political expectations that reinforced that path. When the environment changed, those commitments could only be adjusted slowly and at greater cost.

This combination—confidence in forecasts plus structural commitment—explains why intelligent organizations continue to be surprised.

The Problem Isn’t Planning. It’s Diagnosis

Many companies already use sophisticated strategic tools, such as forecasting models, scenarios, technology roadmaps, and real options analysis. Yet surprises persist. The issue is not a lack of analytical methods. It is that organizations often apply the wrong method to the wrong type of uncertainty.

- Forecasting works when probabilities can be estimated.

- Scenario exploration helps when several distinct futures are plausible.

- Adaptive strategies are useful when commitments must evolve over time.

Organizations rarely pause to determine which situation they are in. Instead, they default to forecasting.

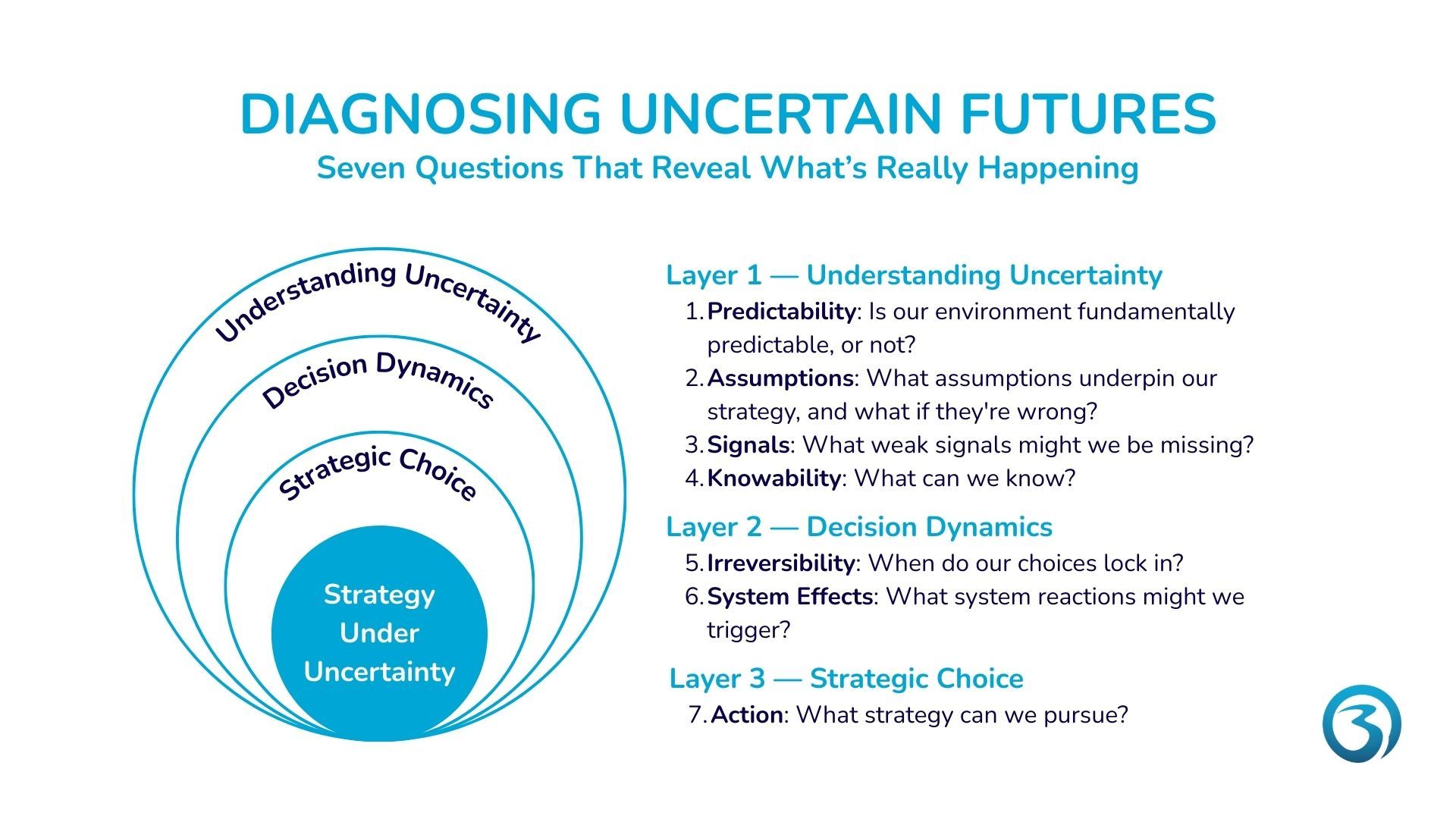

If forecasting is the wrong frame for truly uncertain environments, what's the alternative? The research points toward a different approach—one that treats uncertainty not as a prediction problem but as a strategic design challenge. This approach begins with seven diagnostic questions that help leaders match their decision-making process to the type of uncertainty they actually face.

The Seven Questions

1. Is our environment fundamentally predictable, or not?

2. What assumptions underpin our strategy, and what if they're wrong?

3. What weak signals might we be missing?

4. What can we know?

5. How irreversible is this decision?

6. What system reactions might we trigger?

7. What strategic approach can we best pursue?

Tip

If you want to assess the uncertainty in your organizational environment, use the executive brief Strategy When Uncertainty Is High. Its first module is free.

Practical Guidance for Leaders

Implementing this diagnostic approach requires concrete changes in how organizations make decisions.

- Shift from forecasting to strategy testing. Don't ask "What will happen?" Ask "What could happen, and will our strategy work?" Use scenario planning not to predict the future but to stress-test your strategy against multiple plausible futures. If your strategy fails in several scenarios, redesign it for robustness rather than refining your forecast.

- Make assumptions explicit and monitorable. Document the critical assumptions underlying your strategy. For each assumption, define observable indicators that would signal it’s being violated. Treat these indicators as triggers for strategic review, not as threats to defend against. When assumptions fail, update your strategy—don't escalate your commitment.

- Build amplification processes for weak signals. Create formal mechanisms for frontline employees to escalate observations that don't align with the plan. Protect these channels from being dismissed as "not our focus" or "outside our forecast." Assign responsibility for synthesizing weak signals across functions. Link your scanning processes to your scenario frameworks, so signals have context.

- Design for reversibility and staged commitment. Before making irreversible commitments, ask whether you can structure the decision to preserve options. Can you pilot before scaling? Can you build in modular stages rather than all-at-once? Can you negotiate contractual flexibility? The goal is to reduce the penalty for being wrong, not to increase the precision of being right.

- Counter organizational overconfidence. Require confidence intervals, not point estimates—and then track calibration over time. Use independent forecasting teams to reduce functional bias. Implement rule-based triggers for project termination to counter escalation dynamics. Make it safe to say "our forecast was wrong" by celebrating learning rather than punishing error.

- Commit to monitoring and adaptation. Define in advance what would cause you to change course. These triggers should be specific, observable, and tied to your critical assumptions. When triggers fire, convene a strategic review. The goal isn't to predict when you'll need to adapt—it's to be ready to adapt when the need emerges.
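The assumption and trigger practices above can be kept as a simple register that pairs each critical assumption with an observable indicator and a rule agreed in advance. The Python sketch below shows one hypothetical way to structure such a register. The class and function names, the indicators, and every threshold and reading are invented for illustration and only loosely echo the automotive example. None of them are real data.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Assumption:
    """A critical strategic assumption paired with an observable trigger."""
    statement: str
    indicator: str                     # name of the metric being watched
    violated: Callable[[float], bool]  # rule agreed in advance: True means "review"


def assumptions_to_review(register: list[Assumption],
                          readings: dict[str, float]) -> list[str]:
    """Return the assumptions whose trigger has fired on the latest readings.

    Indicators with no reading yet are skipped, not treated as violations.
    """
    fired = []
    for a in register:
        value = readings.get(a.indicator)
        if value is not None and a.violated(value):
            fired.append(a.statement)
    return fired


# Hypothetical register; all thresholds are invented for illustration.
register = [
    Assumption("Battery pack costs stay above $150/kWh through 2027",
               "battery_cost_usd_per_kwh", lambda v: v < 150),
    Assumption("EVs remain under 10% of new-car sales in our core market",
               "ev_share_of_sales", lambda v: v >= 0.10),
    Assumption("No combustion phase-out date is announced in our core market",
               "phaseout_announced", lambda v: v >= 1),
]

# Invented latest readings; the phase-out indicator has not been reported yet.
latest = {"battery_cost_usd_per_kwh": 142.0, "ev_share_of_sales": 0.07}

for statement in assumptions_to_review(register, latest):
    print("Convene strategic review:", statement)
# prints "Convene strategic review: Battery pack costs stay above $150/kWh through 2027"
```

Because each rule is written before the data arrive, a fired trigger leads straight to a review instead of a debate about whether the evidence counts. This sketch skips missing readings. A real register might flag stale or unreported indicators separately, since silence can itself be a weak signal.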

A More Useful Standard for Strategy

The research points consistently in one direction: forecast overconfidence is most dangerous in exactly the environments where forecasting is least reliable. Leaders who recognize the limits of prediction, distinguishing between what can be forecast and what must be explored, make better decisions under uncertainty.

This doesn't mean abandoning analysis. It means reframing it. Analysis should identify vulnerabilities, not produce false precision. It should surface assumptions, not hide them. It should test strategies across multiple futures, not optimize for a single forecast. It should design flexibility, not justify commitment.
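Testing a strategy across multiple futures, rather than optimizing for one forecast, can be made concrete with a payoff table. The Python sketch below contrasts two ways of choosing. One picks the strategy that does best under a single forecast scenario. The other picks by minimax regret, which is one common robustness criterion from decision analysis and a swapped-in illustration here, not a method this article prescribes. The strategies, scenarios, and payoffs are all invented.

```python
# Invented payoffs (arbitrary units) for each strategy under each scenario.
Payoffs = dict[str, dict[str, float]]

payoffs: Payoffs = {
    "all-in on diesel": {"diesel holds": 100, "fast EV shift": -60, "policy reversal": 40},
    "all-in on EVs":    {"diesel holds": -20, "fast EV shift": 90,  "policy reversal": -30},
    "staged, modular":  {"diesel holds": 50,  "fast EV shift": 50,  "policy reversal": 20},
}


def best_for_forecast(payoffs: Payoffs, scenario: str) -> str:
    """Optimize for a single forecast: the best strategy if that scenario occurs."""
    return max(payoffs, key=lambda s: payoffs[s][scenario])


def max_regret(payoffs: Payoffs) -> dict[str, float]:
    """For each strategy, its worst shortfall versus the best choice in any scenario."""
    scenarios = next(iter(payoffs.values())).keys()
    best = {sc: max(p[sc] for p in payoffs.values()) for sc in scenarios}
    return {s: max(best[sc] - p[sc] for sc in scenarios) for s, p in payoffs.items()}


def minimax_regret(payoffs: Payoffs) -> str:
    """Robust choice: the strategy whose worst-case regret is smallest."""
    regrets = max_regret(payoffs)
    return min(regrets, key=regrets.get)


print(best_for_forecast(payoffs, "diesel holds"))  # prints "all-in on diesel"
print(max_regret(payoffs))
# prints "{'all-in on diesel': 150, 'all-in on EVs': 120, 'staged, modular': 50}"
print(minimax_regret(payoffs))  # prints "staged, modular"
```

The diesel-heavy strategy wins if the single forecast proves right, but it carries the largest regret if that forecast is wrong. The staged strategy is not the best choice in any one scenario, yet it is never far from the best, which is what designing for robustness rather than precision looks like in a payoff table.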

The shift from prediction to design is ultimately a shift in how leaders think about their role. Predictors try to be right about the future. Designers try to be ready for multiple futures. Predictors seek precision. Designers seek resilience. Predictors commit early based on forecasts. Designers preserve optionality and adapt as uncertainty resolves.

In a world of true uncertainty, the question isn't "What will happen?" The question is "What could happen, and are we prepared?" Leaders who ask the right question—and build organizations capable of answering it—don't need perfect forecasts. They need robust strategies, explicit assumptions, amplified signals, staged commitments, and the willingness to adapt. They need to diagnose uncertainty correctly: not as a forecasting problem, but as a strategic design challenge.

The forecast trap is real, pervasive, and costly. But it's not inevitable. With the right diagnostic questions and the right decision-making practices, leaders can escape the trap and navigate uncertainty with clarity, flexibility, and confidence—not in their predictions, but in their ability to adapt.

Next Step: Go Deeper

You came across the diagnostic questions while browsing. But their real value becomes apparent when you apply them to a real strategic decision.

In the executive brief Strategy When Uncertainty Is High, you will learn how to:

- Diagnose the level of uncertainty in your organizational environment

- Choose a strategy that remains viable even if you're wrong about what's changing and when

- Identify the early warnings of changes in your organizational environment

- Inform your stakeholders about uncertainty without worrying them

The first module is free.

Continue Learning

Executive Brief

Strategy When Uncertainty Is High

A practical introduction to diagnosing uncertainty and choosing the strategic action that follows

The full price is €129 ex. VAT. It will be charged only once you decide to upgrade.

Barbara van Veen (Barbara @ FuturistBarbara.com) is an independent researcher and educator at the intersection of strategic foresight, uncertainty, and emerging technologies.

References

This article draws on peer-reviewed research examining forecast overconfidence, escalation of commitment, and strategic decision-making under deep uncertainty.

On Forecast Overconfidence and Miscalibration:

- Ben-David, I., Graham, J. R., & Harvey, C. R. (2013). Managerial miscalibration. Quarterly Journal of Economics, 128(4), 1547–1584. doi.org/10.1093/qje/qjt023

- Durand, R. (2003). Predicting a firm's forecasting ability: The roles of organizational illusion of control and organizational attention. Strategic Management Journal, 24(9), 821–838. doi.org/10.1002/smj.339

- Hilary, G., & Hsu, C. (2011). Endogenous overconfidence in managerial forecasts. Journal of Accounting and Economics, 51(3), 300–313. doi.org/10.2139/ssrn.1748423

- Hribar, P., & Yang, H. (2016). CEO overconfidence and management forecasting. Contemporary Accounting Research, 33(1), 204–227. doi.org/10.2139/ssrn.929731

- Kahneman, D., & Lovallo, D. (1993). Timid choices and bold forecasts: A cognitive perspective on risk taking. Management Science, 39(1), 17–31. doi.org/10.1287/mnsc.39.1.17

- Libby, R., & Rennekamp, K. (2012). Self-serving attribution bias, overconfidence, and the issuance of management forecasts. Journal of Accounting Research, 50(1), 197–231. doi.org/10.1111/j.1475-679x.2011.00430.x

- Mahajan, J. (1992). The overconfidence effect in marketing management predictions. Journal of Marketing Research, 29(3), 329–342. doi.org/10.2307/3172743

On Escalation and Commitment:

- Heath, C. (1995). Escalation and de-escalation of commitment in response to sunk costs: The role of budgeting in mental accounting. Organizational Behavior and Human Decision Processes, 62(1), 38–54. doi.org/10.1006/obhd.1995.1029

- Love, P. E. D., Ika, L. A., & Sing, M. C. P. (2019). Does the planning fallacy prevail in social infrastructure projects? Empirical evidence and competing explanations. IEEE Transactions on Engineering Management, 68(2), 391–404. doi.org/10.1109/tem.2019.2944161

- Staw, B. M., & Ross, J. (1989). Behavior in escalation situations: Antecedents, prototypes, and solutions. Science, 246(4927), 216–220. doi.org/10.1126/science.246.4927.216

On Organizational Forecast Bias:

- Oliva, R., & Watson, N. (2009). Managing functional biases in organizational forecasts: A case study of consensus forecasting in supply chain planning. Production and Operations Management, 18(2), 138–151. doi.org/10.1111/j.1937-5956.2009.01003.x

On Weak Signals and Scanning:

- Shankar, R., & Giones, F. (2023). Building hyper-awareness: How to amplify weak external signals for improved strategic agility. California Management Review, 65(4), 5–29. doi.org/10.1177/00081256231184912

On Fundamental Uncertainty and Strategic Approaches:

- Courtney, H., Kirkland, J., & Viguerie, P. (1997). Strategy under uncertainty. Harvard Business Review, 75(6), 67–79. hbr.org/1997/11/strategy-under-uncertainty

- Foss, N. J. (2023). Knightian uncertainty and the limitations of the Savage heuristic. European Management Review, 20(4), 626–631. doi.org/10.1111/emre.12623

- Knight, F. H. (1921). Risk, uncertainty, and profit. Houghton Mifflin.

On Assumption Surfacing and Adaptive Planning:

- Lempert, R. J., Popper, S. W., & Bankes, S. C. (2003). Shaping the next one hundred years: New methods for quantitative, long-term policy analysis. RAND Corporation. doi.org/10.7249/mr1626

- Mitroff, I. I., Emshoff, J. R., & Kilmann, R. H. (1979). Assumptional analysis: A methodology for strategic problem solving. Management Science, 25(6), 583–593. doi.org/10.1287/mnsc.25.6.583

- Walker, W. E., Haasnoot, M., & Kwakkel, J. H. (2013). Adapt or perish: A review of planning approaches for adaptation under deep uncertainty. Sustainability, 5(3), 955–979. doi.org/10.3390/su5030955